Starter - GENAI

Business Requirement

I am a student and I want answers from my textbooks easily so that I can understand the subject better.

Goal

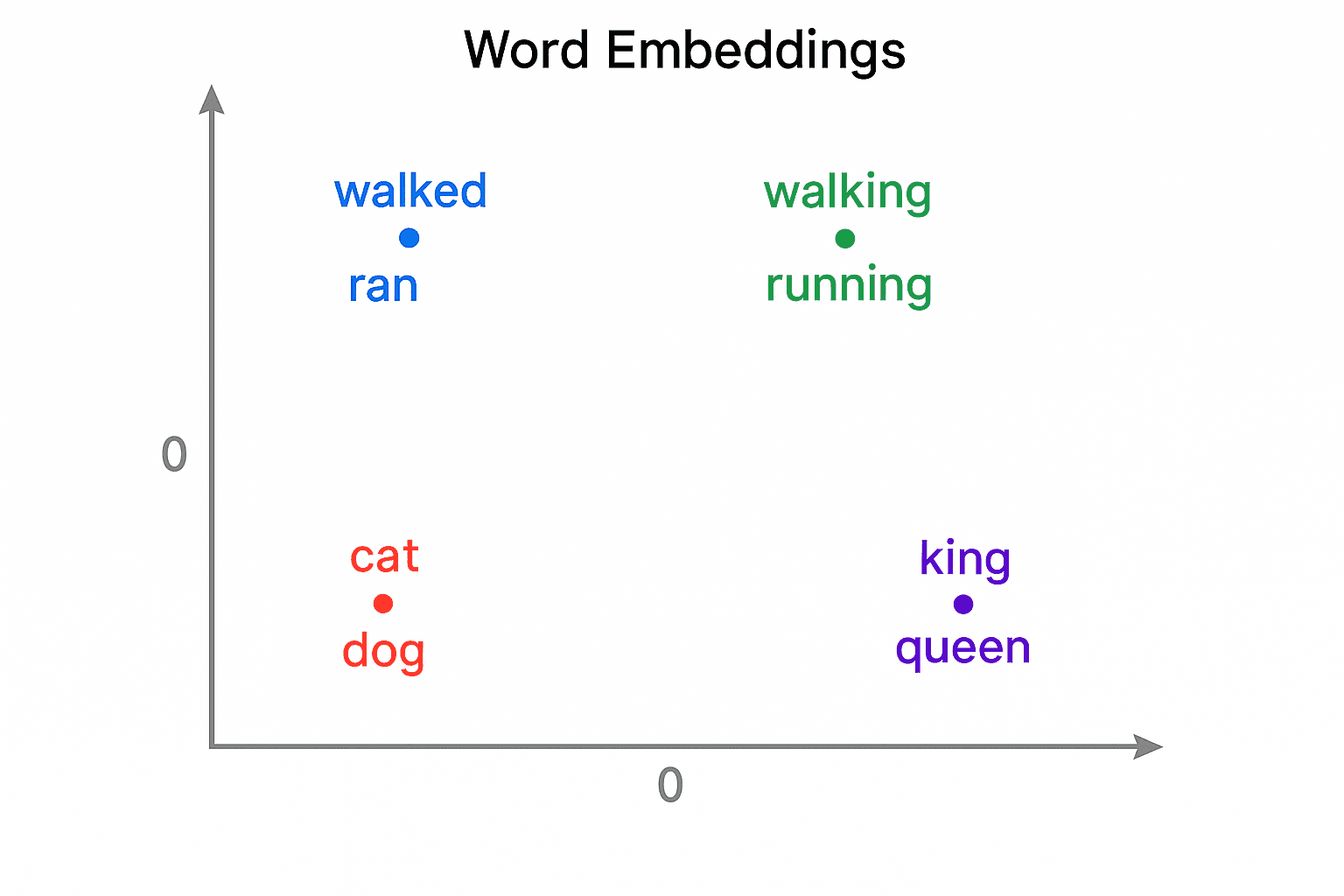

Understand Vector Database

Understand RAG

Understand how to productionize a GENAI app to a certain degree

Ingestion

Get all PDFs from Class 11 Biology PDFs

Create a Qdrant vector database

Write Python Script to upload the PDFs into the database

Do not use frameworks such as Langchain / LLamaIndex

Insert Metadata such as Chapter , Page Number when you are inserting data into the Database

This directory collects helper scripts, configs, and notebooks for working with a Qdrant vector store, ingesting data, and prototyping RAG workflows.

| File Name | File Description |

|---|---|

.env |

Stores endpoint URLs, API keys, and model settings that config_qdrant.py loads for consistent configuration across notebooks. |

01. config_qdrant.py |

Reads the shared environment variables and exposes a configured QdrantClient plus embedding/model metadata. |

02. connect.ipynb |

Minimal notebook that imports QdrantClient and validates the hosted Qdrant connection using the shared config. |

03.create_collection.ipynb |

Defines the BEES collection schema so that later ingestion and search notebooks can store vectors with metadata. |

04. documents_extraction.ipynb |

Uses LangChain’s PyPDFLoader helpers to pull text from PDFs, clean it, and prepare it for embedding. |

05. ingest.ipynb |

Illustrates iterating over local data sources and pushing documents plus embeddings into the configured Qdrant collection. |

06. advanced_rag_qdrant.ipynb |

Walks through a multi-step RAG pipeline, combining ingestion, Qdrant vector search, and OpenAI completions. |

07. hybrid_search_create_collection.ipynb |

Combines the collection creation steps with the hybrid search flow for an all-in-one run. |

08. hybrid_search.ipynb |

Demonstrates a hybrid vector/text search flow built on the shared Qdrant setup. |

09.universal_hybrid_search_create_collection.ipynb |

Builds a universal collection and immediately runs the universal hybrid search |

10.universal_hybrid_search.ipynb |

Shows universal hybrid search examples that can generalize beyond the Netflix/BEES datasets. |

11. netflix_hybrid_search_create_collection.ipynb |

Builds the Netflix collection before running a hybrid search scenario tailored to that data. |

12. netflix.ipynb |

Samples Netflix-specific prompts and retrieval logic against the provided title dataset. |

netflix_titles.csv |

Public Netflix title metadata that fuels the Netflix notebooks; includes genres, descriptions, and other columns. |

Search

User asks a question

Use the question to search the Database

Use simple text search to get results

Use semantic search to get results

Use hybrid search to get results

Understand the difference between text search / semantic search / hybrid search

In the search results show the meta data associated with the result

Advanced [ Implement RRF ]

LLM

User asks a question

Understand RAG

Use the search results and the LLM to get the answer

In the answer , show the portions of the text used to frame the answer [ the Chapter , Page Number ]

Understand how effectively the LLM and RAG is answering the question

UI

Create screens to upload more documents

Create screens to have the user ask a question

Create a chatbot

Create a chatbot with memory

FastAPI

- Use FASTAPI to expose the function

Docker

- Make a docker image of the FASTAPI

Advanced

Langraph

Understand Langraph using the repo https://github.com/ambarishg/langchain_and_langraph

Inspiration from the LangGraph Complete Course for Beginners – Complex AI Agents with Python

Agent Framework

Understand the Agent Framework using the repo https://github.com/ambarishg/agent-framework

Inspiration from Microsoft Agent framework samples